“The reports of my death are greatly exaggerated.”

—Mark Twain

A colleague recently asked for my thoughts on synthetic data in AI. For someone who spends so much time talking about the importance of human values in AI training data and the necessity of having humans involved, you’d think I might be skeptical. I mean, synthetic data? Why would we need it when there’s so much real-world data around us?

Every day, we have access to endless streams of data. Satellites capture imagery of the entire planet, our devices generate text, audio, and video, and governments provide massive datasets via APIs. So, what’s the appeal of synthetic data? Is it a shiny new toy, or does it have a real place in the AI world?

The answer is a little bit of both. While synthetic data has its perks, it won’t replace human-in-the-loop labeling anytime soon. The key is finding the right balance between the two, and making sure we use synthetic data wisely.

“No respect, I don’t get no respect.”

—Rodney Dangerfield

Let’s start by talking about data labeling—because, frankly, it doesn’t get the respect it deserves. Data labeling is the backbone of AI, the unsung hero that makes everything work. It’s not glamorous, but it’s absolutely critical. Like Rodney Dangerfield, it’s often overlooked, but it’s a job that requires real skill and precision. Proper data labeling ensures that AI models perform accurately and reliably.

In the AI world, labeling is essential. Without it, your AI model is running on fumes. Data labeling can make or break your project. That’s why I always ask two key questions when starting any AI project: What does your data look like, and what does success look like? The answers shape the training data strategy. And the truth is, labeling that isn’t done correctly the first time can end up costing more than any other part of your AI system.

But where does synthetic data come into play?

“It’s not what we don’t know that gets us in trouble. It’s what we do know for sure that just ain’t so.”

—Mark Twain

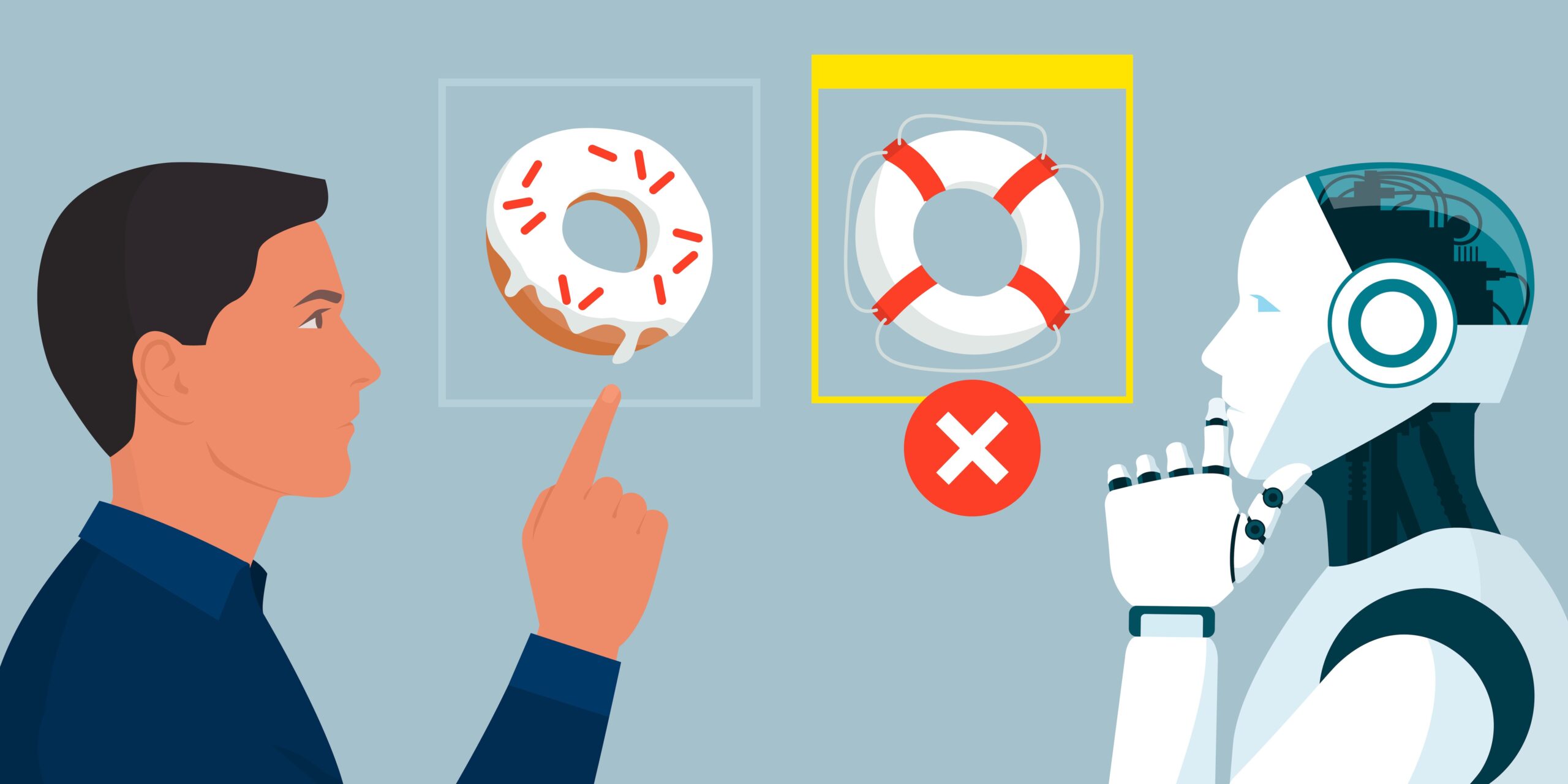

There’s a common belief that synthetic data is the ultimate solution for AI training. It’s fast, cheap, and already labeled—sounds like a dream, right? Well, not so fast.

Synthetic data can be incredibly useful, but only in specific cases. It’s not a one-size-fits-all solution. Here are a few situations where synthetic data can really shine:

Edge Cases: Sometimes, real-world data just can’t provide enough examples of rare scenarios. For instance, in defense applications, you might need images of camouflaged tanks in the desert. Real-world images are scarce, so synthetic data can step in to fill the gap.

Cost-Effectiveness: It’s often cheaper and faster to generate synthetic data than to collect and label real-world data from scratch.

Privacy Compliance: Because synthetic records are generated rather than collected, they don’t have to contain any real person’s sensitive information, which is a big plus in healthcare and finance.

Scalability: You can generate large volumes of synthetic data quickly, allowing for extensive testing and training of AI models.

That said, synthetic data isn’t without its risks. If you’re not careful, errors in synthetic data can multiply and derail your entire project. It’s essential to know when to use synthetic data and, just as importantly, when not to.

“Running on Empty”

—Jackson Browne

Just after my conversation about synthetic data, I read an article in the Wall Street Journal about the limits of large language models (LLMs). The problem? LLMs can’t keep scaling up on their own output. They need human input to grow and improve. Trained again and again without fresh, human-generated text, they drift toward producing nonsense, a phenomenon known as “model collapse.”

In other words, AI needs humans. It’s a paradox, but it’s true: the more advanced AI gets, the more it depends on human input to stay on track. You can’t keep training AI models on nothing but AI-generated content. That’s like making a copy of a copy: every generation loses a little more detail. By some accounts, even a training set that is only 1% synthetic can noticeably degrade the resulting model.
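To make the copy-of-a-copy idea concrete, here is a toy sketch in Python. It is not how LLMs are trained and it proves nothing about real systems. The "model" is just a bell curve fitted to a handful of numbers, and each generation is trained on samples drawn from the previous one. The function name `simulate_generations` and its parameters (`n_samples`, `generations`, `fresh_fraction`, `seed`) are made up for this illustration.

```python
import random
import statistics


def simulate_generations(n_samples=10, generations=500, fresh_fraction=0.0, seed=0):
    """Return the spread (standard deviation) of the training data per generation.

    Hypothetical toy setup: "human" data comes from a normal distribution
    with mean 0 and standard deviation 1; the "model" is a normal
    distribution fitted to whatever data it is given.
    """
    rng = random.Random(seed)
    # Generation 0: purely "human" data.
    data = [rng.gauss(0.0, 1.0) for _ in range(n_samples)]
    spreads = [statistics.pstdev(data)]
    n_fresh = round(n_samples * fresh_fraction)

    for _ in range(generations):
        # "Train" the model: estimate the mean and spread of the current data.
        mu = statistics.fmean(data)
        sigma = statistics.pstdev(data)
        # Build the next training set from the model's own output,
        # optionally topped up with fresh "human" samples.
        synthetic = [rng.gauss(mu, sigma) for _ in range(n_samples - n_fresh)]
        fresh = [rng.gauss(0.0, 1.0) for _ in range(n_fresh)]
        data = synthetic + fresh
        spreads.append(statistics.pstdev(data))

    return spreads


pure = simulate_generations(fresh_fraction=0.0)   # model output only
mixed = simulate_generations(fresh_fraction=0.5)  # half fresh "human" data

print(f"synthetic only: {pure[0]:.3f} -> {pure[-1]:.3g}")
print(f"half fresh:     {mixed[0]:.3f} -> {mixed[-1]:.3g}")
```

In this toy setup, the synthetic-only run should see its spread shrink toward zero: each generation forgets a little of the variety in the original data, and nothing ever puts it back. The run that keeps receiving fresh samples should hold on to a realistic spread. Real models are vastly more complicated, so treat this as an intuition pump rather than evidence.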

This is why companies like OpenAI have struck deals with content providers like Dow Jones and Hearst to ensure they have a steady supply of fresh, high-quality human prose. The future of AI may seem synthetic, but human involvement is still essential. Without humans, AI simply can’t evolve.

So, what’s the takeaway here? While synthetic data has its place, it will never fully replace human-in-the-loop labeling. AI needs the creativity, nuance, and logic that only humans can provide. Synthetic data can fill gaps and help in specific situations, but it can’t do the heavy lifting all on its own.

The future of AI lies in striking a balance between human-curated data and synthetic data. Both have their strengths, and both have their place in the AI ecosystem. As we move forward, it’s crucial to remember that AI isn’t just about technology—it’s about the humans who make it possible.

So, the next time someone tells you that synthetic data is the future, remind them: AI still needs us. And for now, human-in-the-loop labeling isn’t going anywhere.